OpenClaw-Inspired Livesite incident triaging app with Fabric & GitHub Copilot CLI

I was on-call for livesite duty the last couple of weeks and getting blasted with flaky incidents.

These incidents come from both old and new code and do not have clear ownership assignment - this information is buried in git breadcrumbs somewhere, which could have gone through several refactorings. The original authors could also have left the company, and a "soft ownership handover" would have been done to someone who has never touched that code since.

This is a textbook case of what we call toil. By the Google SRE definition:

If you're performing a task for the first time ever, or even the second time, this work is not toil. Toil is work you do over and over. If you're solving a novel problem or inventing a new solution, this work is not toil.

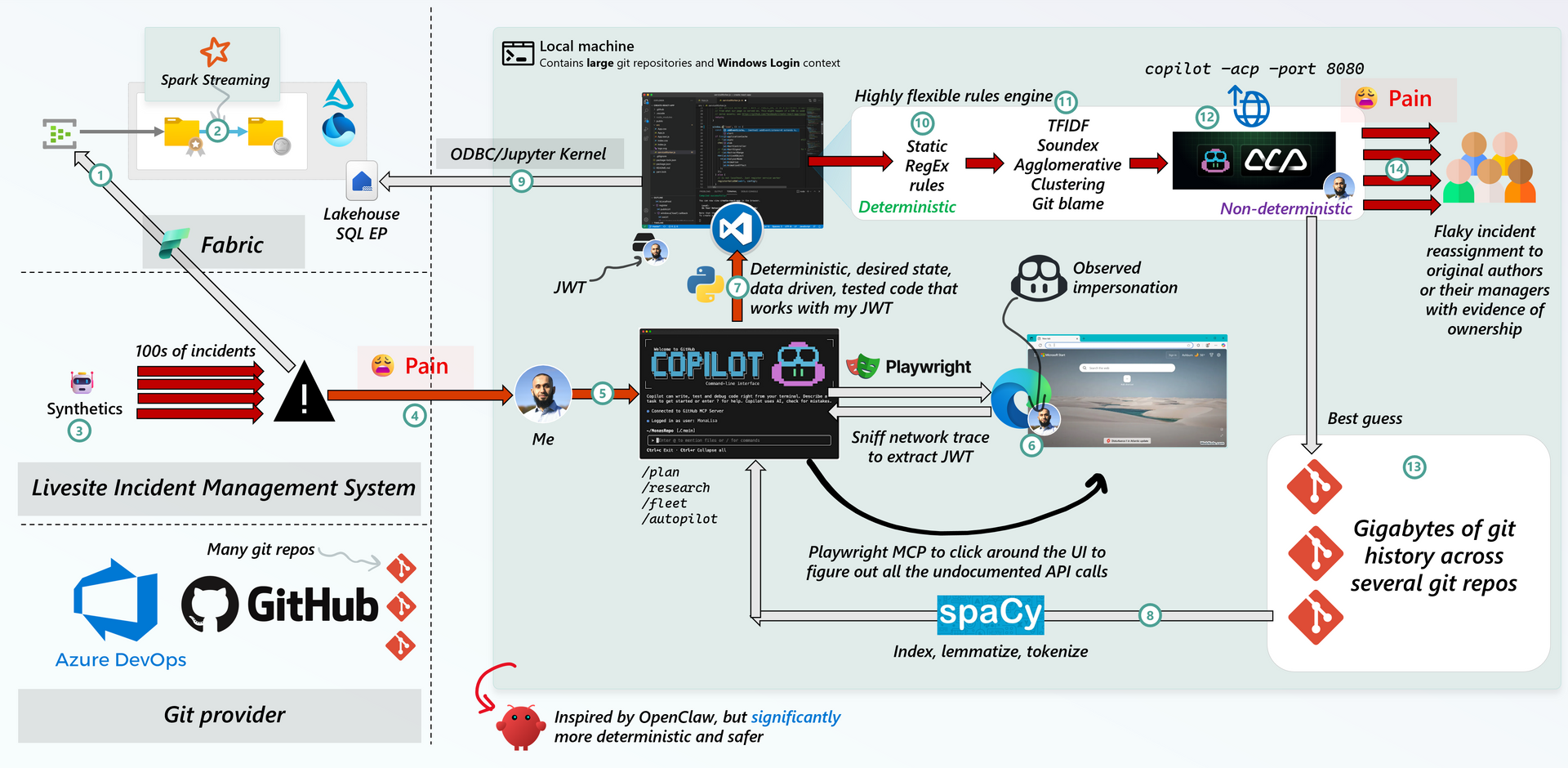

OpenClaw is fascinating for a single reason: it can impersonate you and has access to your local filesystem, which contains personal information.

I wanted to take this concept and see if I could apply it to my situation, without actually using OpenClaw.

Basically, my takeaway is, if you can combine:

- Traditional desired state/long-running apps

- Browser automation to rapidly extract API signatures and turn them into handler code

- Unfettered access to real-time telemetry and source-of-truth APIs

- Some good old Machine Learning via deterministic Natural Language Processing

- The ability to invoke state-of-the-art LLMs that have access to your local filesystem

You can effectively solve any problem while having a ton of fun doing it 😁

The OpenClaw-inspired system below was mostly vibe coded in Python (due to its rich set of NLP libraries), with a couple of manual tweaks here and there.

I just thought up creative ideas, validated the assumptions with /research mode, and used /fleet mode to generate functionally correct code extremely rapidly (not production ready, but it drives the point home extremely well), while dealing with livesite incidents:

The incident management system sends event data to an Event Hub (Fabric EventStream or regular Event Hub).

The data arrives as multiplexed tables with deeply nested JSON schemas. We use Spark Streaming to make quick work of parsing it into Delta Tables with schema-on-read and recursive explosions.
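The "recursive explosion" idea can be illustrated without a cluster. The sketch below is plain Python rather than Spark, and the event shape and field names are made up for illustration. Nested objects become dotted column names and each array element becomes its own row, which is roughly what repeated `explode` calls do on a DataFrame.

```python
from itertools import product
from typing import Any


def explode(value: Any, prefix: str = "") -> list[dict[str, Any]]:
    """Flatten one nested JSON value into a list of flat rows."""
    if isinstance(value, dict):
        # Flatten each field, then emit one row per combination of the
        # children's rows (two sibling arrays yield a cross product).
        per_field = [explode(v, f"{prefix}{k}.") for k, v in value.items()]
        rows = []
        for combo in product(*per_field):
            merged: dict[str, Any] = {}
            for part in combo:
                merged.update(part)
            rows.append(merged)
        return rows
    if isinstance(value, list):
        # Each array element becomes its own row; an empty array keeps
        # a single row with a null so the parent record is not dropped.
        rows = []
        for item in value:
            rows.extend(explode(item, prefix))
        return rows or [{prefix[:-1]: None}]
    # Scalar leaf: the column name is the dotted path without the trailing dot.
    return [{prefix[:-1]: value}]


# Hypothetical incident event, for illustration only.
event = {"id": 1, "impact": {"regions": ["eastus", "westus"]}, "tags": []}
flat_rows = explode(event)
for row in flat_rows:
    print(row)
```

In Spark the same shape of logic would walk the schema instead of the values, but the row fan-out behaves the same way.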

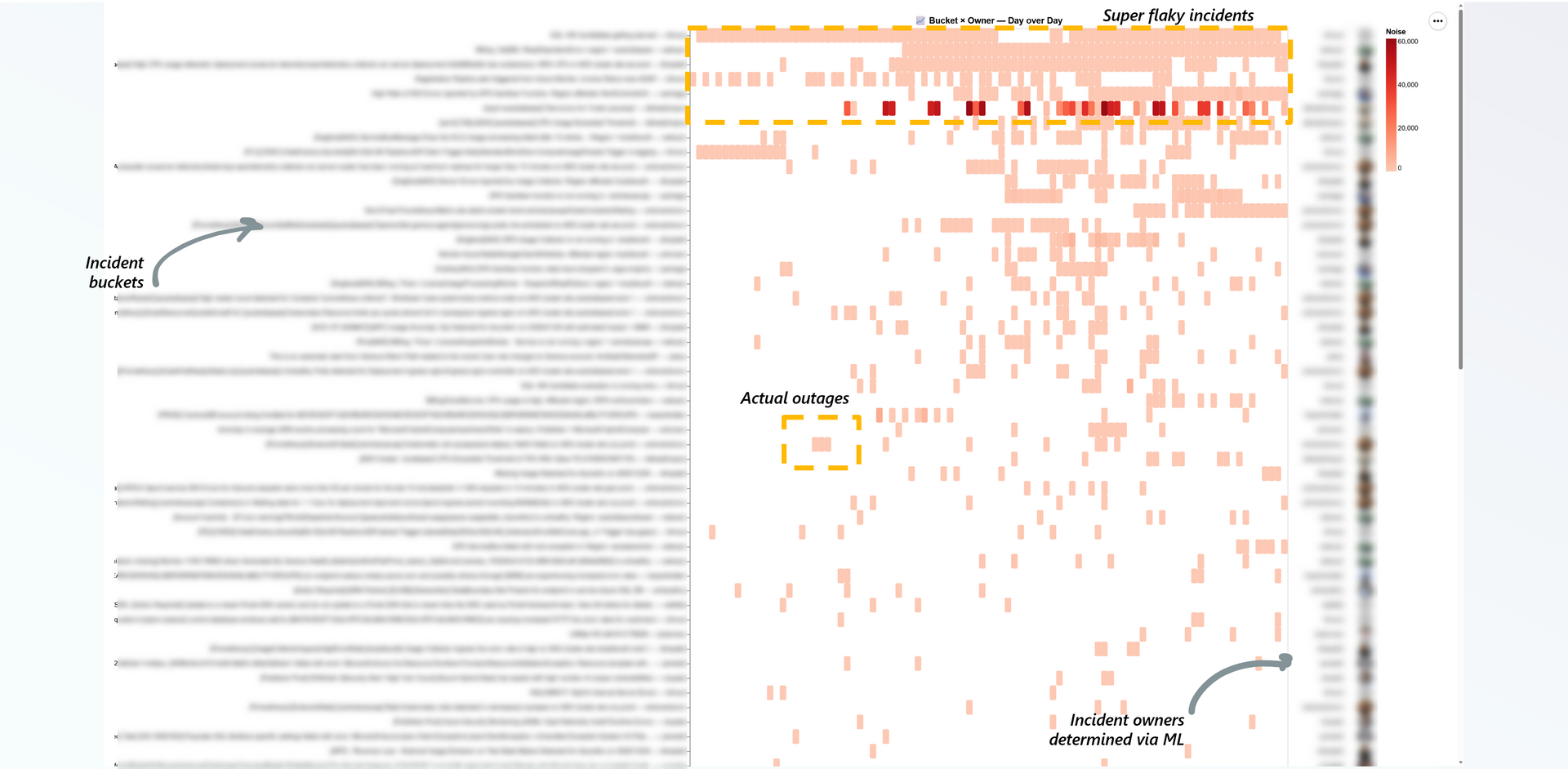

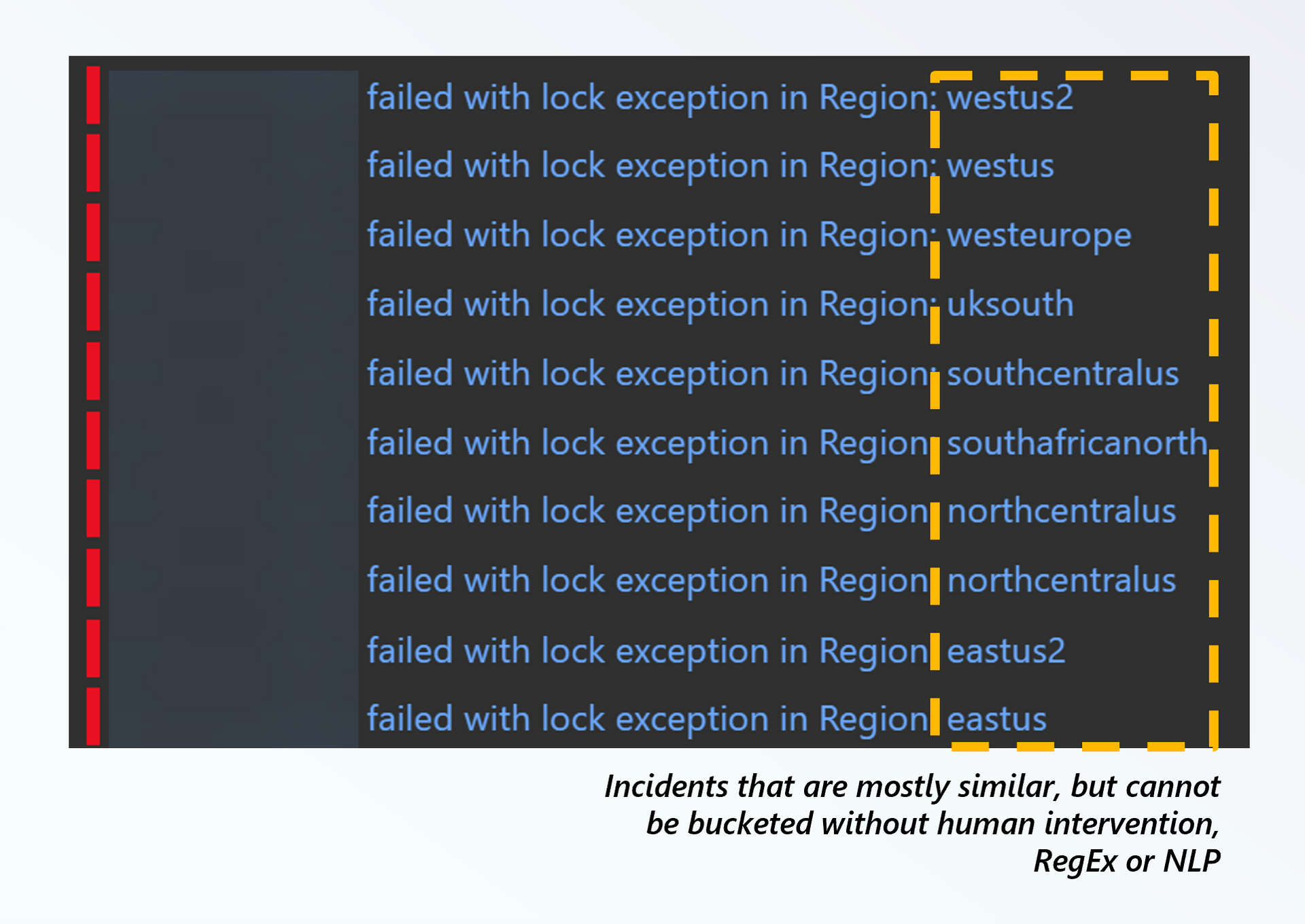

I go on-call, and a barrage of 100s of incidents comes into the incident queue. They're mostly similar looking, but are sometimes bound to different regions or have slightly different root cause symptoms (e.g. lock exception), so you cannot group them into one bucket without using your eyes:

Incidents that should be bucketed to significantly reduce noise

Self-explanatory: I receive 100s of TODOs as email notifications. Textbook toil.

After I got sick of trying to manually group/bucket/triage, I reached for GitHub Copilot CLI to throw together a Python project with a Marimo Notebook entrypoint.

The incident management system we use has a rich set of undocumented APIs that interface with the browser. To figure out the AuthN and the API signatures, I pointed Copilot CLI against microsoft/playwright-mcp.

This allowed it to very rapidly click around the UI at the hyperlinks I hinted it towards, figure out all the API calls required to page data out of the site, and convert the results into Pandas DataFrames. The data is small, Pandas works with all the ML libraries, and speed isn't relevant here, so it's a no-brainer.
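A minimal sketch of the paging loop, assuming the API returns a page of items plus a continuation token. The field names `items` and `nextToken` and the `fetch_page` callable are hypothetical stand-ins, since the real API is internal and undocumented.

```python
from typing import Any, Callable, Iterator, Optional


def iter_rows(
    fetch_page: Callable[[Optional[str]], dict[str, Any]],
) -> Iterator[dict[str, Any]]:
    """Yield every row from a paged API by following continuation tokens."""
    token: Optional[str] = None
    while True:
        page = fetch_page(token)
        yield from page.get("items", [])
        token = page.get("nextToken")
        if not token:
            break  # no continuation token means this was the last page


def fake_fetch(token: Optional[str]) -> dict[str, Any]:
    """Stand-in for the real HTTP call; serves two canned pages."""
    pages = {
        None: {"items": [{"id": 1}, {"id": 2}], "nextToken": "p2"},
        "p2": {"items": [{"id": 3}]},
    }
    return pages[token]


rows = list(iter_rows(fake_fetch))
print(rows)
```

Because the result is a plain list of dicts, `pandas.DataFrame(rows)` turns it into a DataFrame in one call.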

Using the Playwright package for Python, the app launches a headless browser to refresh the token. It is effectively long running, with a self-healing token refresh mechanism (I left it on for a week in an infinite loop).
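The self-healing part can be sketched as a small cache that refreshes the token shortly before it expires. The `refresh` callable is a hypothetical stand-in for the headless-browser login, and the five-minute safety margin is an assumption, not a value from the real app.

```python
import time
from typing import Callable, Tuple


class TokenCache:
    """Caches a bearer token and refreshes it shortly before expiry."""

    def __init__(
        self,
        refresh: Callable[[], Tuple[str, float]],
        skew_seconds: float = 300.0,
        clock: Callable[[], float] = time.time,
    ) -> None:
        self._refresh = refresh  # returns (token, expires_at as epoch seconds)
        self._skew = skew_seconds
        self._clock = clock  # injectable so the logic can be tested
        self._token = ""
        self._expires_at = 0.0

    def get(self) -> str:
        # Refresh when the token is missing or within the safety margin.
        if self._clock() >= self._expires_at - self._skew:
            self._token, self._expires_at = self._refresh()
        return self._token
```

Every API call asks the cache for a token, so a long-running loop never needs to know when the last login happened.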

Before the app launches, GitHub Copilot builds an index of the code by pivoting on code authors per file, see the `git blame --porcelain` docs.

It wasn't very sophisticated, just enough to get by: a simple Python program that reads code files as text, then tokenizes and lemmatizes them (removing stopwords). This was basically an attempt to build a glorified lookup dictionary of human authors to files to terms.
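A rough sketch of that lookup dictionary, built from `git blame --porcelain` output. The parser relies on two properties of the porcelain format: content lines are tab-prefixed, and author metadata appears only the first time a commit is seen. The stopword list, the token pattern and the sample text are illustrative, and lemmatization is left out.

```python
import re
from collections import Counter, defaultdict

HEADER = re.compile(r"^([0-9a-f]{40,64}) \d+ \d+(?: \d+)?$")
TOKEN = re.compile(r"[A-Za-z]{3,}")
STOPWORDS = {"def", "return", "import", "from", "class", "self", "the", "and"}


def terms_per_author(porcelain: str) -> dict[str, Counter]:
    """Map each author to the terms found in the lines they last touched."""
    author_of: dict[str, str] = {}  # commit sha -> author name
    index: dict[str, Counter] = defaultdict(Counter)
    current = None
    for line in porcelain.split("\n"):
        if line.startswith("\t"):
            # Content line: attribute its terms to the current commit's author.
            author = author_of.get(current, "unknown")
            for token in TOKEN.findall(line):
                if token.lower() not in STOPWORDS:
                    index[author][token.lower()] += 1
            continue
        match = HEADER.match(line)
        if match:
            current = match.group(1)
        elif line.startswith("author ") and current is not None:
            author_of[current] = line[len("author "):]
    return dict(index)


# Hand-written sample in porcelain shape, for illustration only.
SHA_A, SHA_B = "a" * 40, "b" * 40
SAMPLE = "\n".join([
    f"{SHA_A} 1 1 2",
    "author Alice",
    "author-mail <alice@example.com>",
    "summary add lock manager",
    "filename lock.py",
    "\tdef acquire_lock(table):",
    f"{SHA_A} 2 2",
    "\t    return LockManager(table)",
    f"{SHA_B} 3 3 1",
    "author Bob",
    "summary retry on lock exception",
    "filename lock.py",
    "\t# retry on lock exception",
])

index = terms_per_author(SAMPLE)
print(index)
```

Running this per file and keying the result by path gives the authors-to-files-to-terms shape described above.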

The key here is, since Copilot and Python have access to gigabytes of source code and git history on my NVMe-backed drive, iterating on this algorithm in `/fleet` mode across CPUs was extremely fast. I added some unit tests to place rigid rules around what I know to be the correct ownership, and Copilot iterated around the test cases to tune the algorithm until they passed.

This is basically a poor man's test/train split.
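The "rigid rules" can be as simple as a table of known owners that any candidate algorithm must reproduce. In this sketch the paths, the aliases and the `naive_predict` function are all hypothetical.

```python
from typing import Callable

# Hypothetical ground truth: paths whose owner is known for certain.
KNOWN_OWNERS = {
    "src/locking/lock_manager.py": "alice",
    "src/ingest/eventhub_reader.py": "bob",
}


def ownership_mismatches(
    predict: Callable[[str], str],
) -> dict[str, tuple[str, str]]:
    """Return {path: (expected, predicted)} for every rule the predictor breaks."""
    mismatches = {}
    for path, expected in KNOWN_OWNERS.items():
        predicted = predict(path)
        if predicted != expected:
            mismatches[path] = (expected, predicted)
    return mismatches


def naive_predict(path: str) -> str:
    """Deliberately weak predictor, to show what a failing rule looks like."""
    return "alice" if "lock" in path else "unknown"


print(ownership_mismatches(naive_predict))
```

A unit test then asserts that the mismatch dictionary is empty, which gives an agent a clear target to iterate against.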

Various telemetry data, including service logs and incident telemetry, is available on Fabric, which the app queries via the new Microsoft ODBC Driver for Microsoft Fabric Data Engineering (Preview).

We opt for the Lakehouse endpoint because Spark SQL covers more ground for regex, JSON and string manipulation than the Fabric SQL Endpoint (albeit the latter is often faster at what it does best). We can also use Catalyst Functions and UDFs to expose regular code as SQL functions, see postgres/teradata functions for Spark.

For terminology we know for certain is owned by an individual, we assign ownership with 100% determinism via good old regex.

Then, for lower confidence but still 100% deterministic behavior, we apply TF-IDF, Soundex and agglomerative clustering.
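A sketch of the regex tier, assuming a first-match-wins rule table. The patterns and owner aliases are invented for illustration.

```python
import re
from typing import Optional

# Hypothetical rules: (pattern, owner alias). The first match wins.
OWNER_RULES = [
    (re.compile(r"\block\s+exception\b", re.IGNORECASE), "alice"),
    (re.compile(r"\bEventHub(Reader|Writer)\b"), "bob"),
]


def owner_by_rule(title: str) -> Optional[str]:
    """Return the owner for a title, or None if no rule applies."""
    for pattern, owner in OWNER_RULES:
        if pattern.search(title):
            return owner
    return None


print(owner_by_rule("Lock Exception while refreshing table in westus"))
print(owner_by_rule("Something nobody has seen before"))
```

Anything that comes back as `None` falls through to the next, lower-confidence tier.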
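To show why this tier stays deterministic, here is a dependency-free sketch of TF-IDF vectors plus average-linkage agglomerative clustering. It is not the bucketing code from the app: Soundex is left out, the 0.5 similarity threshold and the sample titles are assumptions, and a real implementation would more likely lean on an ML library.

```python
import math
import re
from collections import Counter


def tfidf_vectors(docs: list[str]) -> list[dict[str, float]]:
    """Build L2-normalised TF-IDF vectors, one sparse dict per document."""
    tokenised = [re.findall(r"[a-z]+", d.lower()) for d in docs]
    df = Counter(t for tokens in tokenised for t in set(tokens))
    n = len(docs)
    vectors = []
    for tokens in tokenised:
        # Smoothed IDF so a term present in every document keeps weight 1.
        vec = {t: c * (math.log((1 + n) / (1 + df[t])) + 1)
               for t, c in Counter(tokens).items()}
        norm = math.sqrt(sum(w * w for w in vec.values())) or 1.0
        vectors.append({t: w / norm for t, w in vec.items()})
    return vectors


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity of two already-normalised sparse vectors."""
    return sum(w * b.get(t, 0.0) for t, w in a.items())


def cluster(docs: list[str], threshold: float = 0.5) -> list[list[int]]:
    """Merge the most similar pair of clusters until none reach the threshold."""
    vecs = tfidf_vectors(docs)
    clusters = [[i] for i in range(len(docs))]

    def similarity(c1: list[int], c2: list[int]) -> float:
        total = sum(cosine(vecs[i], vecs[j]) for i in c1 for j in c2)
        return total / (len(c1) * len(c2))  # average linkage

    while len(clusters) > 1:
        best, (i, j) = max(
            (similarity(clusters[i], clusters[j]), (i, j))
            for i in range(len(clusters))
            for j in range(i + 1, len(clusters))
        )
        if best < threshold:
            break
        clusters[i] += clusters.pop(j)  # j > i, so index i stays valid
    return clusters


titles = [
    "Lock exception on table refresh in eastus",
    "Lock exception on table refresh in westus",
    "Token refresh failed for ingestion pipeline",
    "Token refresh failed for ingestion pipeline in westus",
]
buckets = cluster(titles)
print(buckets)
```

There is no random initialisation anywhere, so the same titles always land in the same buckets, which makes the output easy to pin down with unit tests.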

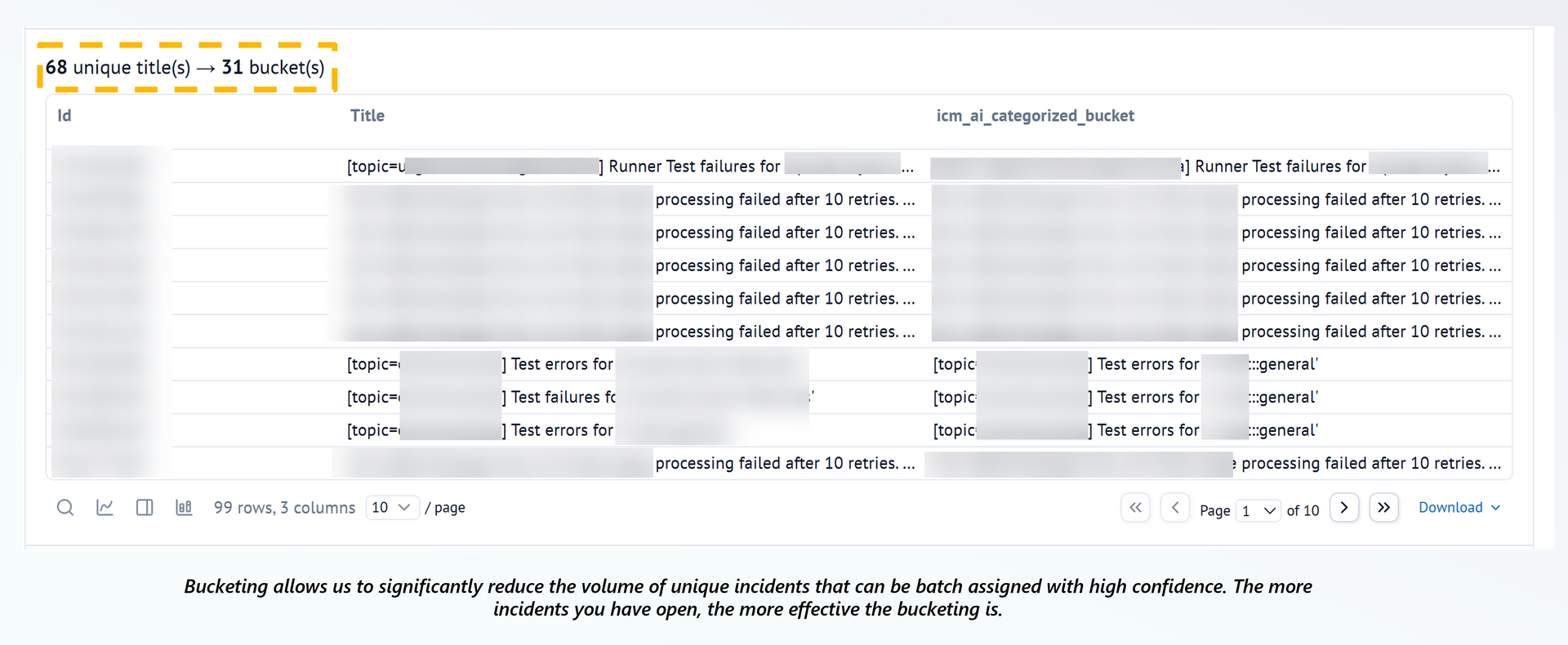

This effectively allows us to bucket the incidents above:

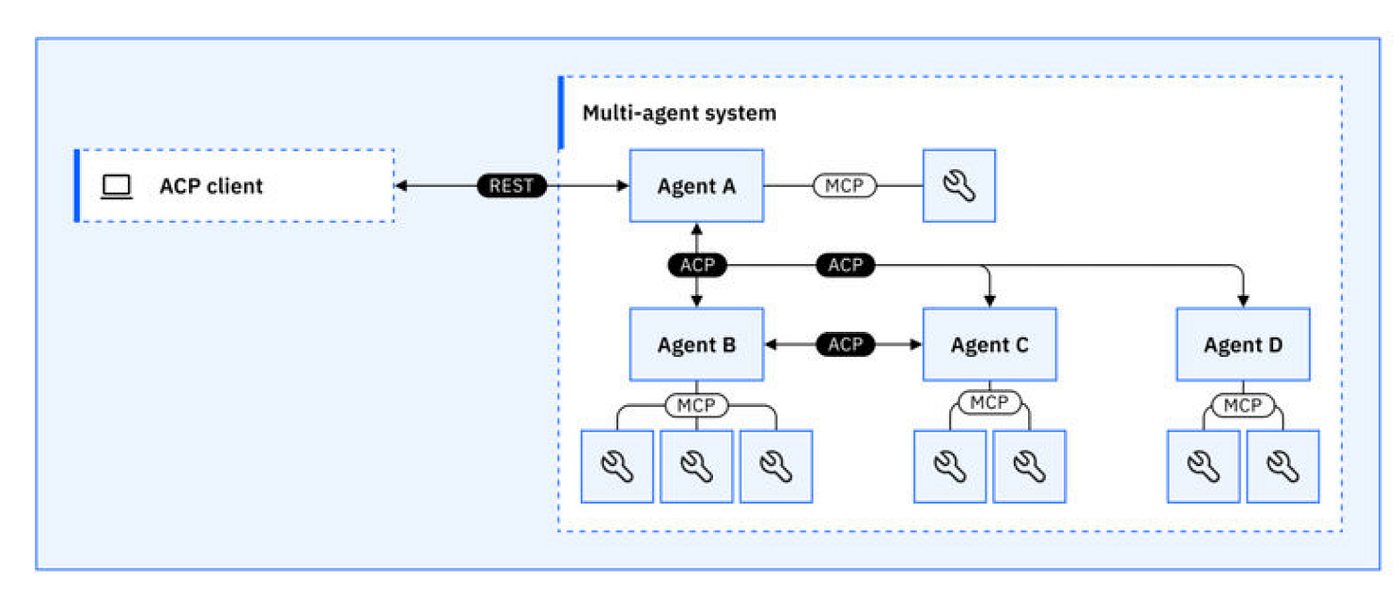

The super fun part: for incidents where ML leaves you with no idea what to do, you can launch Copilot CLI as a local server via its ACP (Agent Client Protocol) support.

What that means is, since Copilot CLI is hooked up to all your organization's MCP servers AND your local filesystem, your app can talk to it over a local port, much like calling a regular API, with access to several cutting-edge models alongside the context:

copilot --acp --port 8080

Running Copilot CLI in ACP mode so you can give your traditional ML Python app superpowers when deterministic ML doesn't work

The Copilot CLI is hooked up with several MCP servers it can reach out to based on the prompt, including searching through and reasoning over git.
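A hedged sketch of what a client for that port could look like. It assumes the Agent Client Protocol framing of one JSON-RPC 2.0 message per line over a TCP socket, and the method names and parameter shapes (`initialize`, `session/new`, `session/prompt`) are taken from a reading of the public ACP spec rather than from the app, so verify them against the current spec before relying on this. The `ask_copilot` helper itself is hypothetical.

```python
import json
import socket
from typing import Any


def encode(request_id: int, method: str, params: dict[str, Any]) -> bytes:
    """Encode one JSON-RPC 2.0 request as a newline-terminated line."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}
    return (json.dumps(message) + "\n").encode("utf-8")


def call(sock: socket.socket, reader: Any, request_id: int,
         method: str, params: dict[str, Any]) -> Any:
    """Send a request and read lines until the matching response arrives."""
    sock.sendall(encode(request_id, method, params))
    for line in reader:
        message = json.loads(line)
        if message.get("id") == request_id and "method" not in message:
            if "error" in message:
                raise RuntimeError(message["error"])
            return message["result"]
        # Anything else is a server-initiated message, such as a streamed update.
        print("server message:", message)
    raise ConnectionError("connection closed before a response arrived")


def ask_copilot(question: str, cwd: str,
                host: str = "127.0.0.1", port: int = 8080) -> Any:
    """Open a session against a local ACP server and send a single prompt."""
    with socket.create_connection((host, port)) as sock, \
            sock.makefile("r", encoding="utf-8") as reader:
        call(sock, reader, 1, "initialize",
             {"protocolVersion": 1, "clientCapabilities": {}})
        session = call(sock, reader, 2, "session/new",
                       {"cwd": cwd, "mcpServers": []})
        return call(sock, reader, 3, "session/prompt", {
            "sessionId": session["sessionId"],
            "prompt": [{"type": "text", "text": question}],
        })
```

With the server from the command above running, something like `ask_copilot("Who most likely owns this lock exception?", "/path/to/repo")` would print the streamed updates and return the final result. A production client would also need to answer any requests the server sends back, such as permission prompts.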

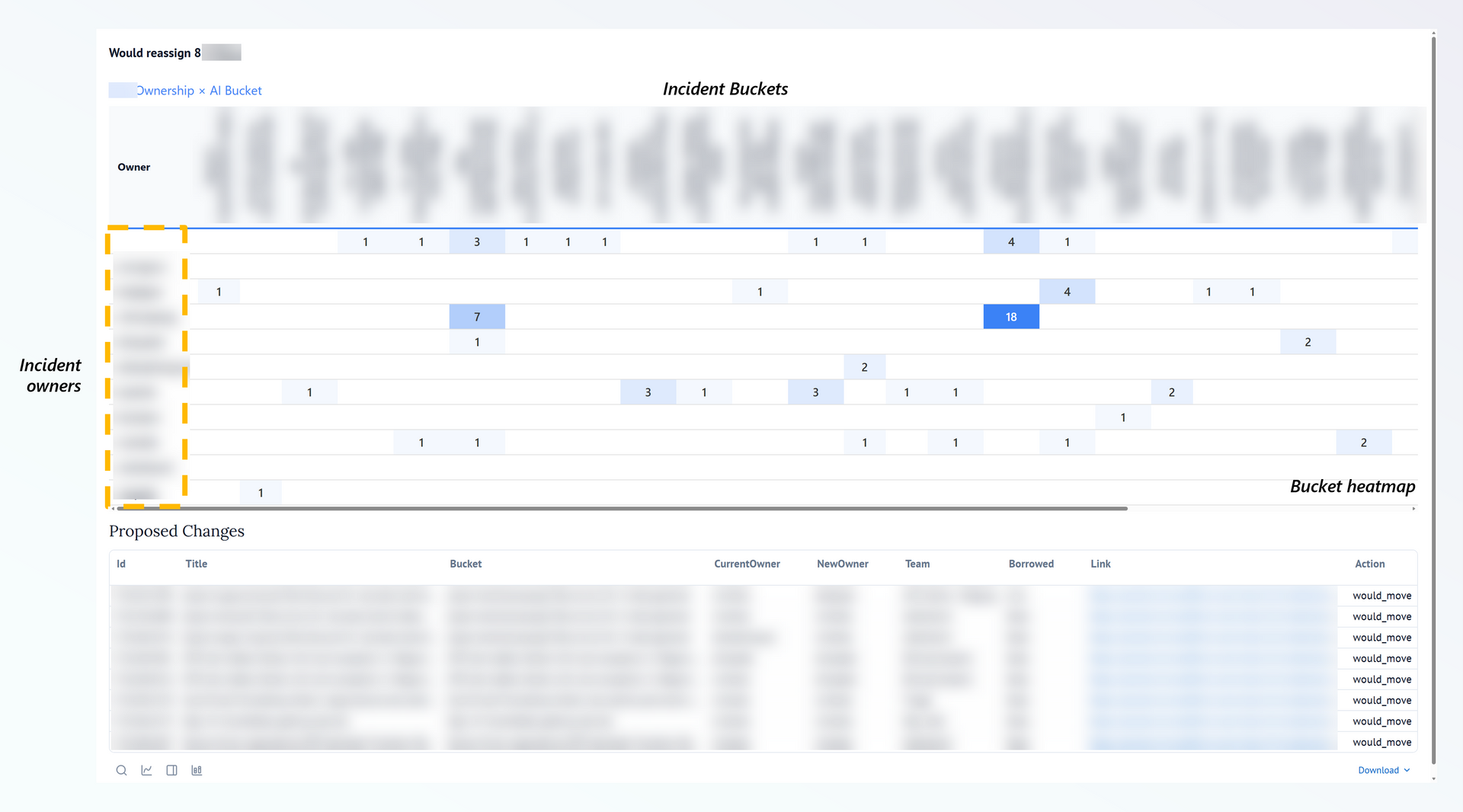

File the incident against the rightful owner per bucket, with a rich visualization of time series trends:

Code

Since most of the business logic is tied to the internal incident management system, I can't share the whole app.

But the most interesting bit is the bucketing algorithm, see incident-bucketing.py. For a simple but effective Lakehouse ODBC parallel query client, see fabric-lakehouse-odbc.py.

Verdict

I gave the app a cheeky little name - "The Janitor":

I'm working on containerizing it and taking it into Production to significantly reduce toil. We can also have the Copilot CLI spawn sub-agents to triage buckets asynchronously and attempt to fix the code/synthetic bug.